Table of Contents

- 1. About Cascading

- 2. Diving In

- 3. Data Processing

- 4. Executing Processes on Hadoop

- 5. Using and Developing Operations

- 6. Custom Taps and Schemes

- 7. Field Typing and Type Coercion

- 8. Advanced Processing

- 9. Built-In Operations

- 10. Built-in Assemblies

- 11. Best Practices

- 12. Extending Cascading

- 13. Cookbook

- 14. How It Works

List of Tables

List of Examples

- 2.1. Word Counting

- 3.1. Chaining Pipes

- 3.2. Merging Two Tuple Streams

- 3.3. Secondary Sorting

- 3.4. Reversing Secondary Sort Order

- 3.5. Reverse Order by Time

- 3.6. Joining Two Tuple Streams with Duplicate Field Names

- 3.7. Joining Two Tuple Streams

- 3.8. Pipe Scope

- 3.9. Step Scope

- 3.10. Creating a new tap

- 3.11. Overwriting An Existing Resource

- 3.12. Creating a new Flow

- 3.13. Binding taps in a Flow

- 3.14. Configuring the Application Jar

- 3.15. Creating a new Cascade

- 4.1. Configuring the Application Jar with a JobConf

- 4.2. Running a Cascading Application

- 5.1. Custom Function

- 5.2. Add Values Function

- 5.3. Add Values Function and Context

- 5.4. Custom Filter

- 5.5. String Length Filter

- 5.6. Custom Aggregator

- 5.7. Add Tuples Aggregator

- 5.8. Custom Buffer

- 5.9. Average Buffer

- 7.1. Constructor

- 7.2. Fluent

- 7.3. Declaring Typed Results

- 7.4. Date Type

- 8.1. Creating a SubAssembly

- 8.2. Using a SubAssembly

- 8.3. Creating a Split SubAssembly

- 8.4. Using a Split SubAssembly

- 8.5. Adding Assertions

- 8.6. Planning Out Assertions

- 8.7. Setting Traps

- 8.8. Adding a Checkpoint

- 8.9. Setting runID

- 8.10. Using a SumBy

- 8.11. Composing partials with AggregateBy

- 9.1. Combining Filters

- 10.1. Composing partials with AggregateBy

- 10.2. Using AverageBy

- 10.3. Using CountBy

- 10.4. Using SumBy

- 10.5. Using FirstBy

- 10.6. Using Coerce

- 10.7. Using Discard

- 10.8. Using Rename

- 10.9. Using Retain

- 10.10. Using Unique


Cascading is a data processing API and processing query planner used for defining, sharing, and executing data-processing workflows on a single computing node or distributed computing cluster. On a single node, Cascading's "local mode" can be used to efficiently test code and process local files before being deployed on a cluster. On a distributed computing cluster using the Apache Hadoop platform, Cascading adds an abstraction layer over the Hadoop API, greatly simplifying Hadoop application development, job creation, and job scheduling.

Cascading was developed to allow organizations to rapidly develop complex data processing applications with Hadoop. The need for Cascading is typically driven by one of two cases:

Increasing data size exceeds the processing capacity of a single computing system. In response, developers may adopt Apache Hadoop as the base computing infrastructure, but discover that developing useful applications on Hadoop is not trivial. Cascading eases the burden on these developers and allows them to rapidly create, refactor, test, and execute complex applications that scale linearly across a cluster of computers.

Increasing process complexity in data centers results in one-off data-processing applications sprawling haphazardly onto any available disk space or CPU. Apache Hadoop solves the problem with its Global Namespace file system, which provides a single reliable storage framework. In this scenario, Cascading eases the learning curve for developers as they convert their existing applications for execution on a Hadoop cluster for its reliability and scalability. In addition, it lets developers create reusable libraries and applications for use by analysts, who use them to extract data from the Hadoop file system.

Since Cascading's creation, a number of Domain Specific Languages (DSLs) have emerged as query languages that wrap the Cascading APIs, allowing developers and analysts to create ad-hoc queries for data mining and exploration. These DSLs coupled with Cascading local-mode allow users to rapidly query and analyze reasonably large datasets on their local systems before executing them at scale in a production environment. See the section on DSLs for references.

Cascading users typically fall into three roles:

The application Executor is a person (e.g., a developer or analyst) or process (e.g., a cron job) that runs a data processing application on a given cluster. This is typically done via the command line, using a prepackaged Java Jar file compiled against the Apache Hadoop and Cascading libraries. The application may accept command-line parameters to customize it for a given execution, and generally outputs a data set to be exported from the Hadoop file system for some specific purpose.

The process Assembler is a person who assembles data processing workflows into unique applications. This work is generally a development task that involves chaining together operations to act on one or more input data sets, producing one or more output data sets. This can be done with the raw Java Cascading API, or with a scripting language such as Scala, Clojure, Groovy, JRuby, or Jython (or by one of the DSLs implemented in these languages).

The operation Developer is a person who writes individual functions or operations (typically in Java) or reusable subassemblies that act on the data that passes through the data processing workflow. A simple example would be a parser that takes a string and converts it to an Integer. Operations are equivalent to Java functions in the sense that they take input arguments and return data. And they can execute at any granularity, from simply parsing a string to performing complex procedures on the argument data using third-party libraries.

All three roles can be filled by a developer, but because Cascading supports a clean separation of these responsibilities, some organizations may choose to use non-developers to run ad-hoc applications or build production processes on a Hadoop cluster.

According to the Hadoop website, Hadoop “is a software platform that lets one easily write and run applications that process vast amounts of data”. Hadoop does this by providing a storage layer that holds vast amounts of data, and an execution layer that runs an application in parallel across the cluster, using coordinated subsets of the stored data.

The storage layer, called the Hadoop Distributed File System (HDFS), looks like a single storage volume that has been optimized for many concurrent serialized reads of large data files - where "large" might be measured in gigabytes or petabytes. However, it does have limitations. For example, efficient random access to the data is not really possible, and Hadoop only supports a single writer for output. But this limit helps make Hadoop very performant and reliable, in part because it allows for the data to be replicated across the cluster, reducing the chance of data loss.

The execution layer, called MapReduce, relies on a divide-and-conquer strategy to manage massive data sets and computing processes. Explaining MapReduce is beyond the scope of this document, but its complexity, and the difficulty of creating real-world applications against it, are the chief driving force behind the creation of Cascading.

Hadoop, according to its documentation, can be configured to run in three modes: standalone mode (i.e., on the local computer, useful for testing and debugging in an IDE), pseudo-distributed mode (i.e., on an emulated "cluster" of one computer, not useful for much), and fully-distributed mode (on a full cluster, for staging or production purposes). The pseudo-distributed mode does not add value for most purposes, and will not be discussed further. Cascading itself can run locally or on the Hadoop platform, where Hadoop itself may be in standalone or distributed mode. The primary difference between these two platforms, local or Hadoop, is that, when Cascading is running in local mode, it makes no use of Hadoop APIs and performs all of its work in memory, allowing it to be very fast - but consequently not as robust or scalable as when it is running on the Hadoop platform.

Apache Hadoop is an Open Source Apache project and is freely available. It can be downloaded from the Hadoop website: http://hadoop.apache.org/core/

Cascading 2.6 supports both Hadoop 1.x and 2.x by providing two Java dependencies, cascading-hadoop.jar and cascading-hadoop2-mr1.jar. These dependencies can be interchanged, but cascading-hadoop2-mr1.jar introduces new API calls and deprecates older ones where appropriate. Note that cascading-hadoop2-mr1.jar only supports MapReduce 1 API conventions. With this naming scheme, new API conventions can be introduced without risk of naming collisions on dependencies.

The most common example presented to new Hadoop (and MapReduce) developers is an application that counts words. It is the Hadoop equivalent to a "Hello World" application.

In the word-counting application, a document is parsed into individual words and the frequency of each word is counted. In the last paragraph, for example, "is" appears twice and "equivalent" appears once.

The following code example uses Cascading to read each line of text from our document file, parse it into words, then count the number of times each word appears.

Example 2.1. Word Counting

```java
// define source and sink Taps.
Scheme sourceScheme = new TextLine( new Fields( "line" ) );
Tap source = new Hfs( sourceScheme, inputPath );

Scheme sinkScheme = new TextDelimited( new Fields( "word", "count" ) );
Tap sink = new Hfs( sinkScheme, outputPath, SinkMode.REPLACE );

// the 'head' of the pipe assembly
Pipe assembly = new Pipe( "wordcount" );

// For each input Tuple
// parse out each word into a new Tuple with the field name "word"
// regular expressions are optional in Cascading
String regex = "(?<!\\pL)(?=\\pL)[^ ]*(?<=\\pL)(?!\\pL)";
Function function = new RegexGenerator( new Fields( "word" ), regex );
assembly = new Each( assembly, new Fields( "line" ), function );

// group the Tuple stream by the "word" value
assembly = new GroupBy( assembly, new Fields( "word" ) );

// For every Tuple group
// count the number of occurrences of "word" and store result in
// a field named "count"
Aggregator count = new Count( new Fields( "count" ) );
assembly = new Every( assembly, count );

// initialize app properties, tell Hadoop which jar file to use
Properties properties = AppProps.appProps()
  .setName( "word-count-application" )
  .setJarClass( Main.class )
  .buildProperties();

// plan a new Flow from the assembly using the source and sink Taps
// with the above properties
FlowConnector flowConnector = new HadoopFlowConnector( properties );
Flow flow = flowConnector.connect( "word-count", source, sink, assembly );

// execute the flow, block until complete
flow.complete();
```

Several features of this example are worth highlighting.

First, notice that the pipe assembly is not coupled to the data (i.e., the Tap instances) until the last moment before execution. File paths or references are not embedded in the pipe assembly; instead, the pipe assembly is specified independent of data inputs and outputs. The only dependency is the data scheme, i.e., the field names. In Cascading, every input or output file has field names associated with it, and every processing element of the pipe assembly either expects the specified fields or creates them. This allows developers to easily self-document their code, and allows the Cascading planner to "fail fast" if an expected dependency between elements isn't satisfied - for instance, if a needed field name is missing or incorrect. (If more information is desired on the planner, see MapReduce Job Planner.)

Also notice that pipe assemblies are assembled through constructor chaining. This may seem odd, but it is done for two reasons. First, it keeps the code more concise. Second, it prevents developers from creating "cycles" (i.e., recursive loops) in the resulting pipe assembly. Pipe assemblies are intended to be Directed Acyclic Graphs (DAGs), and in keeping with this, the Cascading planner is not designed to handle processes that feed themselves. (If desired, there are safer approaches to achieving this result.)
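To see why constructor chaining rules out cycles, consider the following minimal plain-Java sketch. The ChainedNode class is hypothetical and is not part of the Cascading API; it only mimics the way each pipe receives its predecessor through its constructor. Because the predecessor reference is final and must already exist when a node is created, a node can never point at something created after it, so walking back from the tail always terminates.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for a pipe: the predecessor is fixed at construction time.
final class ChainedNode {
    final String name;
    final ChainedNode previous; // null marks the head of the chain

    ChainedNode(String name) {
        this(name, null);
    }

    ChainedNode(String name, ChainedNode previous) {
        this.name = name;
        this.previous = previous;
    }

    // Walk from this node back to the head, collecting names in head-to-tail order.
    List<String> pathFromHead() {
        List<String> names = new ArrayList<>();
        for (ChainedNode n = this; n != null; n = n.previous) {
            names.add(0, n.name);
        }
        return names;
    }

    public static void main(String[] args) {
        ChainedNode assembly = new ChainedNode("wordcount");
        assembly = new ChainedNode("each", assembly);
        assembly = new ChainedNode("groupBy", assembly);
        System.out.println(assembly.pathFromHead()); // [wordcount, each, groupBy]
    }
}
```

Reassigning the same variable, as the word-count example does with its assembly variable, only moves the handle to the newest tail; the earlier nodes remain reachable through the chain.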

Finally, notice that the very first Pipe instance has a name. That instance is the head of this particular pipe assembly. Pipe assemblies can have any number of heads, and any number of tails. Although the tail in this example does not have a name, in a more complex assembly it would. In general, heads and tails of pipe assemblies are assigned names to disambiguate them. One reason is that names are used to bind sources and sinks to pipes during planning. (The example above is an exception, because there is only one head and one tail - and consequently only one source and one sink - so the binding is unmistakable.) Another reason is that the naming of pipes contributes to self-documentation of pipe assemblies, especially where there are splits, joins, and merges in the assembly.

To sum up, the example word-counting application will:

- read each line of text from a file and give it the field name "line"

- parse each "line" into words with the RegexGenerator object, which returns each word in the field named "word"

- sort and group all the tuples on the "word" field, using the GroupBy object

- count the number of elements in each group, using the Count object, and store this value in the "count" field

- and write out the "word" and "count" fields.
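The steps above can also be sketched in plain Java, without Cascading or Hadoop, to make clear what the assembly computes. This is only an in-memory model under stated assumptions: the class and method names are hypothetical, the regular expression is copied from Example 2.1, and a sorted map stands in for the grouping step.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class WordCountSketch {
    // Same regular expression as in Example 2.1.
    static final Pattern WORD =
        Pattern.compile("(?<!\\pL)(?=\\pL)[^ ]*(?<=\\pL)(?!\\pL)");

    // Emits one "word" per regex match, groups equal words, and counts each group.
    static Map<String, Integer> countWords(Iterable<String> lines) {
        Map<String, Integer> counts = new TreeMap<>(); // keys kept sorted by word
        for (String line : lines) {
            Matcher matcher = WORD.matcher(line);
            while (matcher.find()) {
                counts.merge(matcher.group(), 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
            "It is the Hadoop equivalent",
            "to a \"Hello World\" application.");
        // Prints each word with its count, one "word=count" entry per distinct word.
        System.out.println(countWords(lines));
    }
}
```

Unlike the Cascading version, this sketch holds every count in memory on one machine, which is exactly the limitation that the pipe assembly avoids when it runs on a cluster.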


The Cascading processing model is based on a metaphor of pipes (data streams) and filters (data operations). Thus the Cascading API allows the developer to assemble pipe assemblies that split, merge, group, or join streams of data while applying operations to each data record or groups of records.

In Cascading, we call a data record a tuple, a simple chain of pipes without forks or merges a branch, an interconnected set of pipe branches a pipe assembly, and a series of tuples passing through a pipe branch or assembly a tuple stream.

Pipe assemblies are specified independently of the data source they are to process. So before a pipe assembly can be executed, it must be bound to taps, i.e., data sources and sinks. The result of binding one or more pipe assemblies to taps is a flow, which is executed on a computer or cluster using the Hadoop framework.

Multiple flows can be grouped together and executed as a single process. In this context, if one flow depends on the output of another, it is not executed until all of its data dependencies are satisfied. Such a collection of flows is called a cascade.
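The dependency rule for a cascade can be illustrated with a small plain-Java sketch. It is not Cascading's scheduler, whose internals are not described here; the class name, flow names, and resource names are all hypothetical. Each flow is reduced to one source and one sink, and a flow is considered ready once its source is either external (produced by no flow) or already written by an earlier flow.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

class CascadeOrderSketch {
    // flows maps a flow name to {source resource, sink resource}.
    // Returns an order in which no flow runs before the flow that produces its source.
    static List<String> executionOrder(Map<String, String[]> flows) {
        Set<String> produced = new HashSet<>();   // resources written by some flow
        for (String[] io : flows.values()) {
            produced.add(io[1]);
        }
        Set<String> available = new HashSet<>();  // resources already written
        List<String> order = new ArrayList<>();
        boolean progressed = true;
        while (order.size() < flows.size() && progressed) {
            progressed = false;
            for (Map.Entry<String, String[]> flow : flows.entrySet()) {
                String source = flow.getValue()[0];
                boolean ready = !produced.contains(source) || available.contains(source);
                if (ready && !order.contains(flow.getKey())) {
                    order.add(flow.getKey());
                    available.add(flow.getValue()[1]);
                    progressed = true;
                }
            }
        }
        if (order.size() < flows.size()) {
            throw new IllegalStateException("cyclic dependency between flows");
        }
        return order;
    }

    public static void main(String[] args) {
        Map<String, String[]> flows = new LinkedHashMap<>();
        flows.put("report", new String[] { "counts", "report" });
        flows.put("count", new String[] { "raw", "counts" });
        System.out.println(executionOrder(flows)); // [count, report]
    }
}
```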

Pipe assemblies define what work should be done against tuple streams, which are read from tap sources and written to tap sinks. The work performed on the data stream may include actions such as filtering, transforming, organizing, and calculating. Pipe assemblies may use multiple sources and multiple sinks, and may define splits, merges, and joins to manipulate the tuple streams.

Pipe assemblies are created by chaining cascading.pipe.Pipe classes and subclasses together. Chaining is accomplished by passing the previous Pipe instances to the constructor of the next Pipe instance.

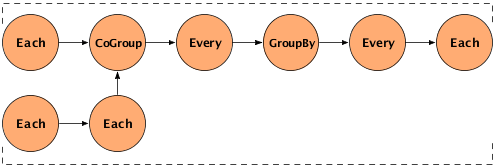

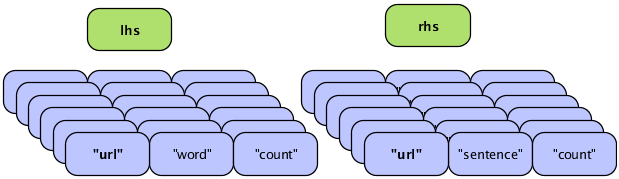

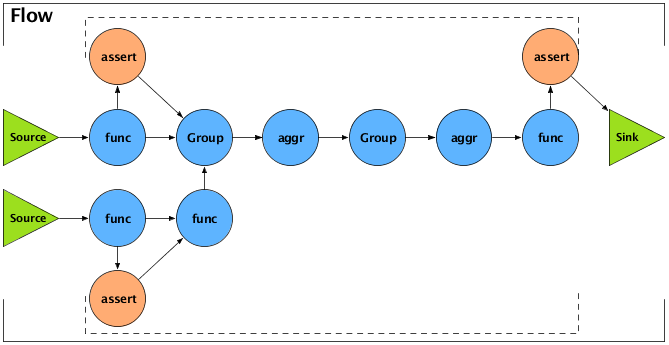

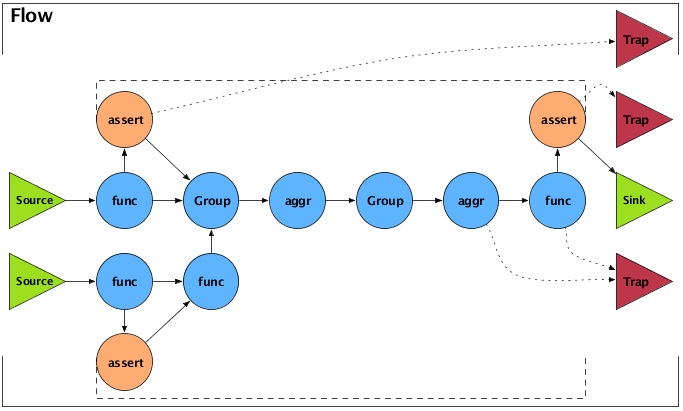

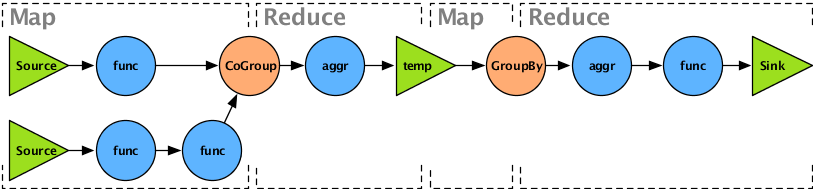

The following example demonstrates this type of chaining. It creates two pipes - a "left-hand side" (lhs) and a "right-hand side" (rhs) - and performs some processing on them both, using the Each pipe. Then it joins the two pipes into one, using the CoGroup pipe, and performs several operations on the joined pipe using Every and GroupBy. The specific operations performed are not important in the example; the point is to show the general flow of the data streams. The diagram after the example gives a visual representation of the workflow.

Example 3.1. Chaining Pipes

```java
// the "left hand side" assembly head
Pipe lhs = new Pipe( "lhs" );

lhs = new Each( lhs, new SomeFunction() );
lhs = new Each( lhs, new SomeFilter() );

// the "right hand side" assembly head
Pipe rhs = new Pipe( "rhs" );

rhs = new Each( rhs, new SomeFunction() );

// joins the lhs and rhs
Pipe join = new CoGroup( lhs, rhs );

join = new Every( join, new SomeAggregator() );

join = new GroupBy( join );

join = new Every( join, new SomeAggregator() );

// the tail of the assembly
join = new Each( join, new SomeFunction() );
```

The following diagram is a visual representation of the example above.

As data moves through the pipe, streams may be separated or combined for various purposes. Here are the three basic patterns:

- Split

  A split takes a single stream and sends it down multiple paths - that is, it feeds a single Pipe instance into two or more subsequent separate Pipe instances with unique branch names.

- Merge

  A merge combines two or more streams that have identical fields into a single stream. This is done by passing two or more Pipe instances to a Merge or GroupBy pipe.

- Join

  A join combines data from two or more streams that have different fields, based on common field values (analogous to a SQL join). This is done by passing two or more Pipe instances to a HashJoin or CoGroup pipe. The code sequence and diagram above give an example.
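The difference between a merge and a join can be modelled in a few lines of plain Java. This is an illustrative sketch only, not the Cascading implementation: the class and method names are hypothetical, tuples are represented as string arrays, and the join is an inner join on the first value of each tuple.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class StreamPatternsSketch {
    // Merge: both streams share the same fields, so the tuples are simply pooled.
    static List<String[]> merge(List<String[]> lhs, List<String[]> rhs) {
        List<String[]> out = new ArrayList<>(lhs);
        out.addAll(rhs);
        return out;
    }

    // Inner join: the streams have different fields and are matched on the first value.
    static List<String[]> innerJoin(List<String[]> lhs, List<String[]> rhs) {
        Map<String, List<String[]>> rhsByKey = new HashMap<>();
        for (String[] tuple : rhs) {
            rhsByKey.computeIfAbsent(tuple[0], k -> new ArrayList<>()).add(tuple);
        }
        List<String[]> out = new ArrayList<>();
        for (String[] left : lhs) {
            List<String[]> matches = rhsByKey.get(left[0]);
            if (matches == null) {
                continue; // no matching key on the right-hand side
            }
            for (String[] right : matches) {
                out.add(new String[] { left[0], left[1], right[1] });
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String[]> names = Arrays.asList(
            new String[] { "1", "ann" }, new String[] { "2", "bob" });
        List<String[]> cities = Arrays.asList(
            new String[] { "1", "nyc" }, new String[] { "3", "sf" });
        for (String[] tuple : innerJoin(names, cities)) {
            System.out.println(Arrays.toString(tuple)); // [1, ann, nyc]
        }
    }
}
```

A split needs no code of its own in this model: it corresponds to passing the same input list to two different downstream methods.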

In addition to directing the tuple streams - using splits, merges, and joins - pipe assemblies can examine, filter, organize, and transform the tuple data as the streams move through the pipe assemblies. To facilitate this, the values in the tuple are typically given field names, just as database columns are given names, so that they may be referenced or selected. The following terminology is used:

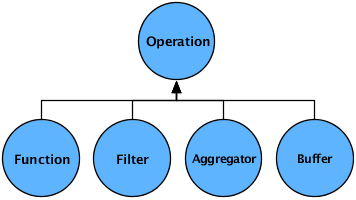

- Operation

  Operations (cascading.operation.Operation) accept an input argument Tuple, and output zero or more result tuples. There are a few sub-types of operations defined below. Cascading has a number of generic Operations that can be used, or developers can create their own custom Operations.

- Tuple

  In Cascading, data is processed as a stream of Tuples (cascading.tuple.Tuple), which are composed of fields, much like a database record or row. A Tuple is effectively an array of (field) values, where each value can be any java.lang.Object Java type (or byte[] array). For information on supporting non-primitive types, see Custom Types.

- Fields

  Fields (cascading.tuple.Fields) are used either to declare the field names for fields in a Tuple, or reference field values in a Tuple. They can either be strings (such as "firstname" or "birthdate"), integers (for the field position, starting at 0 for the first position, or starting at -1 for the last position), or one of the predefined Fields sets (such as Fields.ALL, which selects all values in the Tuple, like an asterisk in SQL). For more on Fields sets, see Field Algebra.
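The positional convention for Fields (0 for the first value, -1 for the last) can be made concrete with a short plain-Java sketch. The class and method below are hypothetical and only model the convention described above; they are not how Cascading resolves positions internally.

```java
class FieldPositionSketch {
    // Resolves a possibly negative field position against a tuple of the given size.
    static int resolve(int position, int size) {
        int index = position < 0 ? size + position : position;
        if (index < 0 || index >= size) {
            throw new IndexOutOfBoundsException(
                "position " + position + " is out of range for size " + size);
        }
        return index;
    }

    public static void main(String[] args) {
        Object[] tuple = { "john", "doe", "1970-01-01" };
        System.out.println(tuple[resolve(0, tuple.length)]);  // john
        System.out.println(tuple[resolve(-1, tuple.length)]); // 1970-01-01
    }
}
```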

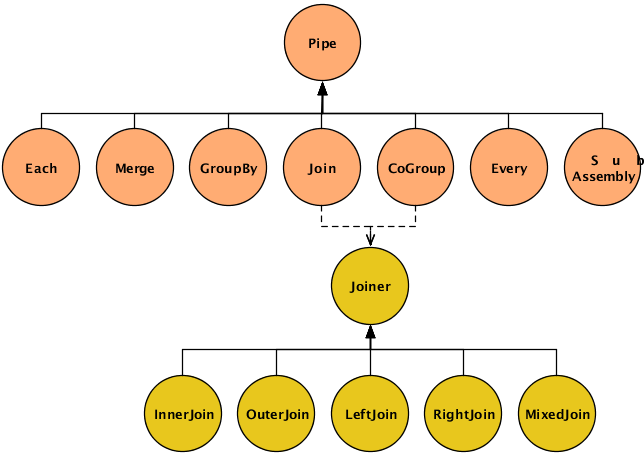

The code for the sample pipe assembly above, Chaining Pipes, consists almost entirely of a series of Pipe constructors. This section describes the various Pipe classes in detail. The base class cascading.pipe.Pipe and its subclasses are shown in the diagram below.

The Pipe class is used to instantiate and name a pipe. Pipe names are used by the planner to bind taps to the pipe as sources or sinks. (A third option is to bind a tap to the pipe branch as a trap, discussed elsewhere as an advanced topic.)

The SubAssembly subclass is a special type of pipe. It is used to nest re-usable pipe assemblies within a Pipe class for inclusion in a larger pipe assembly. For more information on this, see the section on SubAssemblies.

The other six types of pipes are used to perform operations on the tuple streams as they pass through the pipe assemblies. This may involve operating on the individual tuples (e.g., transform or filter), on groups of related tuples (e.g., count or subtotal), or on entire streams (e.g., split, combine, group, or sort). These six pipe types are briefly introduced here, then explored in detail further below.

- Each

  These pipes perform operations based on the data contents of tuples - analyze, transform, or filter. The Each pipe operates on individual tuples in the stream, applying functions or filters such as conditionally replacing certain field values, removing tuples that have values outside a target range, etc.

  You can also use Each to split or branch a stream, simply by routing the output of an Each into a different pipe or sink.

  Note that with Each, as with other types of pipe, you can specify a list of fields to output, thereby removing unwanted fields from a stream.

- Merge

  Just as Each can be used to split one stream into two, Merge can be used to combine two or more streams into one, as long as they have the same fields.

  A Merge accepts two or more streams that have identical fields, and emits a single stream of tuples (in arbitrary order) that contains all the tuples from all the specified input streams. Thus a Merge is just a mingling of all the tuples from the input streams, as if shuffling multiple card decks into one.

  Use Merge when no grouping is required (i.e., no aggregator or buffer operations will be performed). Merge is much faster than GroupBy (see below) for merging.

  To combine streams that have different fields, based on one or more common values, use CoGroup or HashJoin.

- GroupBy

  GroupBy groups the tuples of a stream based on common values in a specified field.

  If passed multiple streams as inputs, it performs a merge before the grouping. As with Merge, a GroupBy requires that multiple input streams share the same field structure.

  The purpose of grouping is typically to prepare a stream for processing by the Every pipe, which performs aggregator and buffer operations on the groups, such as counting, totalling, or averaging values within that group.

  It should be clear that "grouping" here essentially means sorting all the tuples into groups based on the value of a particular field. However, within a given group, the tuples are in arbitrary order unless you specify a secondary sort key. For most purposes, a secondary sort is not required and only increases the execution time.

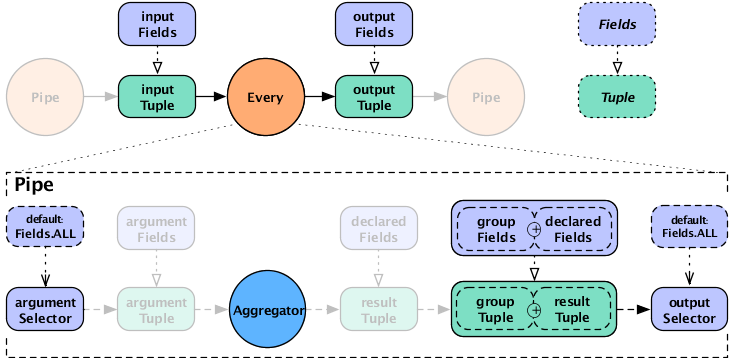

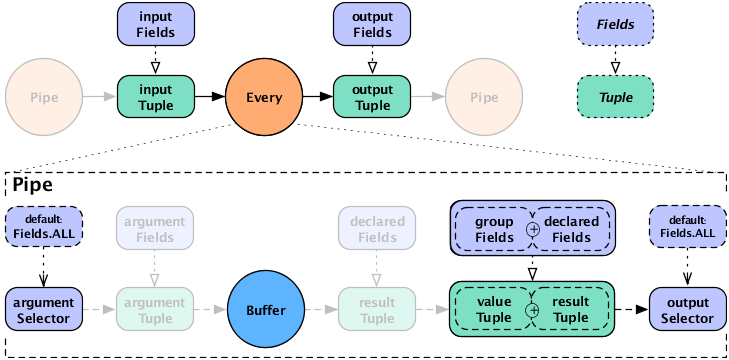

- Every

  The Every pipe operates on a tuple stream that has been grouped (by GroupBy or CoGroup) on the values of a particular field, such as timestamp or zipcode. It's used to apply aggregator or buffer operations such as counting, totaling, or averaging field values within each group. Thus the Every class is only for use on the output of GroupBy or CoGroup, and cannot be used with the output of Each, Merge, or HashJoin.

  An Every instance may follow another Every instance, so Aggregator operations can be chained. This is not true for Buffer operations.

- CoGroup

  CoGroup performs a join on two or more streams, similar to a SQL join, and groups the single resulting output stream on the value of a specified field. As with SQL, the join can be inner, outer, left, or right. Self-joins are permitted, as well as mixed joins (for three or more streams) and custom joins. Null fields in the input streams become corresponding null fields in the output stream.

  The resulting output stream contains fields from all the input streams. If the streams contain any field names in common, they must be renamed to avoid duplicate field names in the resulting tuples.

- HashJoin

  HashJoin performs a join on two or more streams, similar to a SQL join, and emits a single stream in arbitrary order. As with SQL, the join can be inner, outer, left, or right. Self-joins are permitted, as well as mixed joins (for three or more streams) and custom joins. Null fields in the input streams become corresponding null fields in the output stream.

  For applications that do not require grouping, HashJoin provides faster execution than CoGroup, but only within certain prescribed cases. It is optimized for joining one or more small streams to no more than one large stream. Developers should thoroughly understand the limitations of this class, as described below, before attempting to use it.

The following table summarizes the different types of pipes.

Table 3.1. Comparison of pipe types

| Pipe type | Purpose | Input | Output |
| --- | --- | --- | --- |
| Pipe | instantiate a pipe; create or name a branch | name | a (named) pipe |
| SubAssembly | create nested subassemblies | | |
| Each | apply a filter or function, or branch a stream | tuple stream (grouped or not) | a tuple stream, optionally filtered or transformed |
| Merge | merge two or more streams with identical fields | two or more tuple streams | a tuple stream, unsorted |
| GroupBy | sort/group on field values; optionally merge two or more streams with identical fields | one or more tuple streams with identical fields | a single tuple stream, grouped on key field(s) with optional secondary sort |
| Every | apply aggregator or buffer operation | grouped tuple stream | a tuple stream plus new fields with operation results |
| CoGroup | join one or more streams on matching field values | one or more tuple streams | a single tuple stream, joined on key field(s) |
| HashJoin | join one or more streams on matching field values | one or more tuple streams | a tuple stream in arbitrary order |

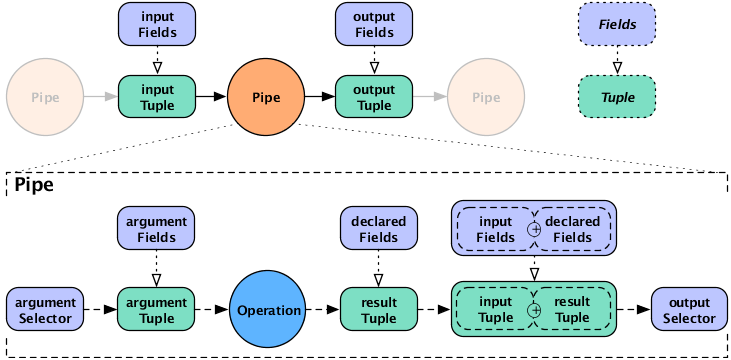

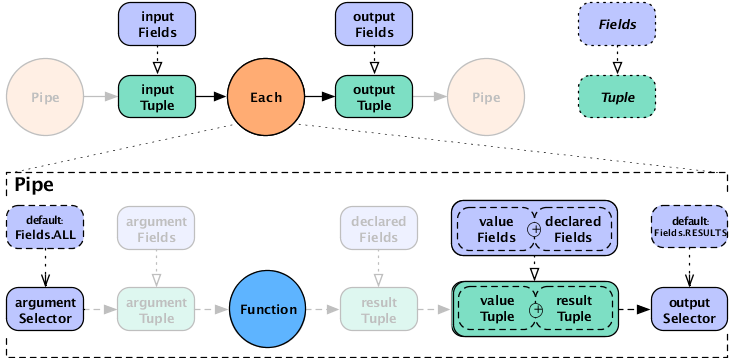

The Each and Every pipes perform operations on tuple data - for instance, perform a search-and-replace on tuple contents, filter out some of the tuples based on their contents, or count the number of tuples in a stream that share a common field value.

Here is the syntax for these pipes:

```java
new Each( previousPipe, argumentSelector, operation, outputSelector )
new Every( previousPipe, argumentSelector, operation, outputSelector )
```

Both types take four arguments:

- a Pipe instance

- an argument selector

- an Operation instance

- an output selector on the constructor (selectors here are Fields instances)

The key difference between Each and Every is that Each operates on individual tuples, and Every operates on groups of tuples emitted by GroupBy or CoGroup. This affects the kind of operations that these two pipes can perform, and the kind of output they produce as a result.

The Each pipe applies operations that are subclasses of Function and Filter (described in the Javadoc). For example, using Each you can parse lines from a logfile into their constituent fields, filter out all lines except the HTTP GET requests, and replace the timestring fields with date fields.

Similarly, since the Every pipe works on tuple groups (the output of a GroupBy or CoGroup pipe), it applies operations that are subclasses of Aggregator and Buffer. For example, you could use GroupBy to group the output of the above Each pipe by date, then use an Every pipe to count the GET requests per date. The pipe would then emit the operation results as the date and count for each group.

In the syntax shown at the start of this section, the argument selector specifies fields from the input tuple to use as input values. If the argument selector is not specified, the whole input tuple (Fields.ALL) is passed to the operation as a set of argument values.

Most Operation subclasses declare result fields (shown as "declared fields" in the diagram). The output selector specifies the fields of the output Tuple from the fields of the input Tuple and the operation result. This new output Tuple becomes the input Tuple to the next pipe in the pipe assembly. If the output selector is Fields.ALL, the output is the input Tuple plus the operation result, merged into a single Tuple.

Note that it's possible for a Function or Aggregator to return more than one output Tuple per input Tuple. In this case, the input tuple is duplicated as many times as necessary to create the necessary output tuples. This is similar to the reiteration of values that happens during a join. If a function is designed to always emit three result tuples for every input tuple, each of the three outgoing tuples will consist of the selected input tuple values plus one of the three sets of function result values.

If the output selector is not specified for an Each pipe performing a Function operation, the operation results are returned by default (Fields.RESULTS), discarding the input tuple values in the tuple stream. (This is not true of Filter operations, which either discard the input tuple or return it intact, and thus do not use an output selector.)
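The effect of the output selector can be modelled in plain Java. The sketch below is not the Cascading API: the class, the enum, and the method are hypothetical, tuples are represented as ordered maps from field name to value, and the two enum constants merely stand in for Fields.ALL and Fields.RESULTS.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class OutputSelectorSketch {
    // Hypothetical stand-ins for Fields.ALL and Fields.RESULTS.
    enum Selector { ALL, RESULTS }

    // Builds one output tuple per result tuple emitted by the operation.
    static List<Map<String, Object>> select(Map<String, Object> input,
                                            List<Map<String, Object>> results,
                                            Selector selector) {
        List<Map<String, Object>> output = new ArrayList<>();
        for (Map<String, Object> result : results) {
            Map<String, Object> tuple = new LinkedHashMap<>();
            if (selector == Selector.ALL) {
                tuple.putAll(input); // input values are repeated for every result
            }
            tuple.putAll(result);
            output.add(tuple);
        }
        return output;
    }

    public static void main(String[] args) {
        Map<String, Object> input = new LinkedHashMap<>();
        input.put("line", "to be");
        List<Map<String, Object>> results = new ArrayList<>();
        for (String word : "to be".split(" ")) {
            Map<String, Object> result = new LinkedHashMap<>();
            result.put("word", word);
            results.add(result);
        }
        // [{line=to be, word=to}, {line=to be, word=be}]
        System.out.println(select(input, results, Selector.ALL));
        // [{word=to}, {word=be}]
        System.out.println(select(input, results, Selector.RESULTS));
    }
}
```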

For the Every pipe, the Aggregator results are appended to the input Tuple (Fields.ALL) by default.

Note that the Every pipe associates Aggregator results with the current group Tuple (the unique keys currently being grouped on). For example, if you are grouping on the field "department" and counting the number of "names" grouped by that department, the resulting output Fields will be ["department","num_employees"].

If you are also adding up the salaries associated with each "name" in each "department", the output Fields will be ["department","num_employees","total_salaries"].

This is only true for chains of Aggregator Operations - you are not allowed to chain Buffer operations, as explained below.
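The department example can be worked through in plain Java to show how two chained aggregations both attach their results to the grouping key. This is a sketch under stated assumptions, not Cascading code: the class and method names are hypothetical, and each input row is assumed to hold a department, a name, and a salary, in that order.

```java
import java.util.Arrays;
import java.util.Map;
import java.util.TreeMap;

class AggregatorChainSketch {
    // Each input row is {department, name, salary}.
    // Returns department -> {num_employees, total_salaries}.
    static Map<String, long[]> aggregate(Object[][] rows) {
        Map<String, long[]> byDepartment = new TreeMap<>();
        for (Object[] row : rows) {
            long[] totals = byDepartment.computeIfAbsent((String) row[0], k -> new long[2]);
            totals[0] += 1;                              // first aggregation: count
            totals[1] += ((Number) row[2]).longValue();  // second aggregation: sum
        }
        return byDepartment;
    }

    public static void main(String[] args) {
        Object[][] rows = {
            { "eng", "ann", 100 },
            { "eng", "bob", 150 },
            { "ops", "cy", 90 },
        };
        for (Map.Entry<String, long[]> group : aggregate(rows).entrySet()) {
            // One output tuple per department: key, count, total.
            System.out.println(group.getKey() + " " + Arrays.toString(group.getValue()));
        }
    }
}
```

Each department yields exactly one output tuple, and the per-employee "name" values do not appear in it, which mirrors the ["department","num_employees","total_salaries"] output described above.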

When the Every pipe is used with a Buffer operation, instead of an Aggregator, the behavior is different. Instead of being associated with the current grouping tuple, the operation results are associated with the current values tuple. This is analogous to how an Each pipe works with a Function. This approach may seem slightly unintuitive, but provides much more flexibility. To put it another way, the results of the buffer operation are not appended to the current keys being grouped on. It is up to the buffer to emit them if they are relevant. It is also possible for a Buffer to emit more than one result Tuple per unique grouping. That is, a Buffer may or may not emulate an Aggregator, where an Aggregator is just a special optimized case of a Buffer.

For more information on how operations process fields, see Operations and Field-processing.

The Merge pipe is very simple. It accepts two or more streams that have the same fields, and emits a single stream containing all the tuples from all the input streams. Thus a merge is just a mingling of all the tuples from the input streams, as if shuffling multiple card decks into one. Note that the output of Merge is in arbitrary order.

The example above simply combines all the tuples from two existing streams ("lhs" and "rhs") into a new tuple stream ("merge").

GroupBy groups the tuples of a stream based on common values in a specified field. If passed multiple streams as inputs, it performs a merge before the grouping. As with Merge, a GroupBy requires that multiple input streams share the same field structure.

The output of GroupBy is suitable for the Every pipe, which performs Aggregator and Buffer operations, such as counting, totalling, or averaging groups of tuples that have a common value (e.g., the same date). By default, GroupBy performs no secondary sort, so within each group the tuples are in arbitrary order. For instance, when grouping on "lastname", the tuples [doe, john] and [doe, jane] end up in the same group, but in arbitrary sequence.

If multi-level sorting is desired, the names of the sort fields must be specified to the GroupBy instance, as seen below. In this example, value1 and value2 will arrive in their natural sort order (assuming they are java.lang.Comparable).

Example 3.3. Secondary Sorting

```java
Fields groupFields = new Fields( "group1", "group2" );
Fields sortFields = new Fields( "value1", "value2" );
Pipe groupBy = new GroupBy( assembly, groupFields, sortFields );
```

If we don't care about the order of value2, we can leave it out of the sortFields Fields constructor.

In the next example, we reverse the order of value1 while keeping the natural order of value2.

Example 3.4. Reversing Secondary Sort Order

Fields groupFields = new Fields( "group1", "group2" );

Fields sortFields = new Fields( "value1", "value2" );

sortFields.setComparator( "value1", Collections.reverseOrder() );

Pipe groupBy = new GroupBy( assembly, groupFields, sortFields );

Whenever there is an implied sort during grouping or secondary

sorting, a custom java.util.Comparator can

optionally be supplied to the grouping Fields

or secondary sort Fields. This allows the

developer to use the Fields.setComparator() call to

control the sort.

To sort or group on non-Java-comparable classes, consider

creating a custom Comparator.

Below is a more practical example, where we group by the "day of the year", but want to reverse the order of the tuples within that grouping by "time of day".

Example 3.5. Reverse Order by Time

Fields groupFields = new Fields( "year", "month", "day" );

Fields sortFields = new Fields( "hour", "minute", "second" );

sortFields.setComparators(

Collections.reverseOrder(), // hour

Collections.reverseOrder(), // minute

Collections.reverseOrder() ); // second

Pipe groupBy = new GroupBy( assembly, groupFields, sortFields );
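To make the effect of reversed comparators on a secondary sort more concrete, the following is a minimal plain-Java sketch that uses only the standard library, not the Cascading API. The class `SecondarySortSketch`, its nested `Event` type, and the method `groupAndSort` are hypothetical names introduced for illustration. It groups values by a key and then orders each group with `Collections.reverseOrder()`, which is roughly the ordering a GroupBy with reversed sort-field comparators would hand to a downstream Every pipe.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class SecondarySortSketch {
  // Hypothetical stand-in for a tuple: one grouping key plus two sort values.
  static final class Event {
    final String day;
    final int hour;
    final int minute;

    Event(String day, int hour, int minute) {
      this.day = day;
      this.hour = hour;
      this.minute = minute;
    }

    @Override
    public String toString() {
      return day + " " + hour + ":" + minute;
    }
  }

  // Groups events by day (groups appear in natural key order), then sorts
  // each group by hour and minute, both in reverse order.
  static Map<String, List<Event>> groupAndSort(List<Event> events) {
    Comparator<Event> latestFirst = Comparator
        .comparing((Event e) -> e.hour, Collections.reverseOrder())
        .thenComparing((Event e) -> e.minute, Collections.reverseOrder());

    Map<String, List<Event>> groups = new TreeMap<>();
    for (Event e : events) {
      groups.computeIfAbsent(e.day, k -> new ArrayList<>()).add(e);
    }
    for (List<Event> group : groups.values()) {
      group.sort(latestFirst);
    }
    return groups;
  }

  public static void main(String[] args) {
    List<Event> events = new ArrayList<>();
    events.add(new Event("d2", 9, 30));
    events.add(new Event("d1", 8, 15));
    events.add(new Event("d1", 23, 5));
    events.add(new Event("d1", 8, 45));
    System.out.println(groupAndSort(events));
  }
}
```

Leaving the minute comparator out of the chain would leave events that share an hour in their arrival order, which parallels omitting a field from sortFields.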

The CoGroup pipe is similar to

GroupBy, but instead of a merge, performs a

join. That is, CoGroup accepts two or more

input streams and groups them on one or more specified keys, and

performs a join operation on equal key values, similar to a SQL

join.

The output stream contains all the fields from all the input streams.

As with SQL, the join can be inner, outer, left, or right. Self-joins are permitted, as well as mixed joins (for three or more streams) and custom joins. Null fields in the input streams become corresponding null fields in the output stream.

Since the output is grouped, it is suitable for the

Every pipe, which performs

Aggregator and Buffer

operations - such as counting, totalling, or averaging groups of

tuples that have a common value (e.g., the same date).

The output stream is sorted by the natural order of the grouping

fields. To control this order, at least the first

groupingFields value given should be an

instance of Fields containing

Comparator instances for the appropriate

fields. This allows fine-grained control of the sort grouping

order.

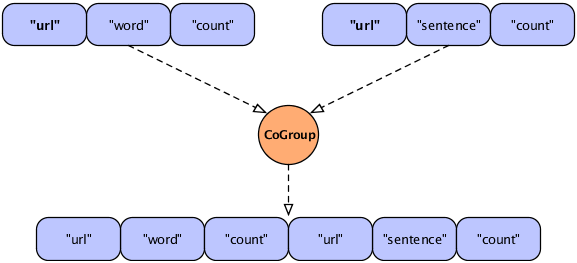

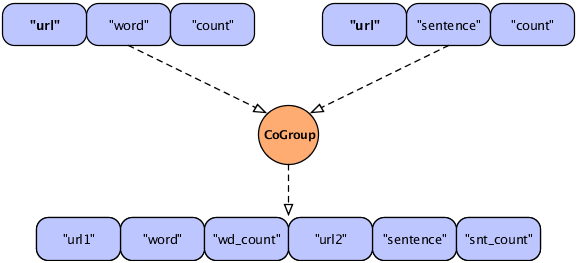

In a join operation, all the field names used in any of the input tuples must be unique; duplicate field names are not allowed. If the names overlap there is a collision, as shown in the following diagram.

In this figure, two streams are to be joined on the "url"

field, resulting in a new Tuple that contains fields from the two

input tuples. However, the resulting tuple would include two fields

with the same name ("url"), which is unworkable. To handle the

conflict, developers can use the

declaredFields argument (described in the

Javadoc) to declare unique field names for the output tuple, as in

the following example.

Example 3.6. Joining Two Tuple Streams with Duplicate Field Names

Fields common = new Fields( "url" );

Fields declared = new Fields(

"url1", "word", "wd_count", "url2", "sentence", "snt_count"

);

Pipe join =

new CoGroup( lhs, common, rhs, common, declared, new InnerJoin() );

This revised figure demonstrates the use of declared field names to prevent a planning failure.

It might seem preferable for Cascading to automatically

recognize the duplication and simply merge the identically-named

fields, saving effort for the developer. However, consider the case

of an outer type join in which one field (or set of fields used for

the join) for a given join side happens to be null.

Discarding one of the duplicate fields would lose this

information.

Further, the internal implementation relies on field position,

not field names, when reading tuples; the field names are a device

for the developer. This approach allows the behavior of the

CoGroup to be deterministic and

predictable.

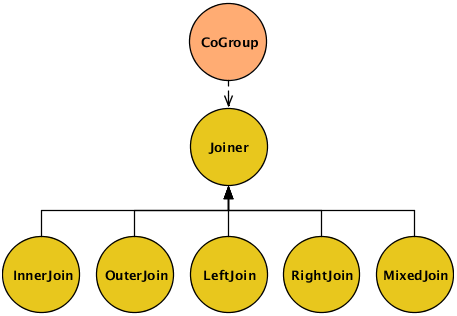

In the example above, we explicitly specified a Joiner class (InnerJoin) to perform a join on our data. There are five Joiner subclasses, as shown in this diagram.

In CoGroup, the join is performed after

all the input streams are first co-grouped by their common keys.

Cascading must create a "bag" of data for every grouping in the

input streams, consisting of all the Tuple

instances associated with that grouping.

It's already been mentioned that joins in Cascading are

analogous to joins in SQL. The most commonly-used type of join is

the inner join, the default in CoGroup. An

inner join tries to match each

Tuple on the "lhs" with every

Tuple on the "rhs", based on matching field values. With an inner

join, if either side has no tuples for a given value, no tuples are

joined. An outer join, conversely, allows for either side to be

empty and simply substitutes a Tuple

containing null values for the non-existent

tuple.

This sample data is used in the discussion below to explain and compare the different types of join:

LHS = [0,a] [1,b] [2,c] RHS = [0,A] [2,C] [3,D]

In each join type below, the values are joined on the first tuple position (the join key), a numeric value. Note that, when Cascading joins tuples, the resulting Tuple contains all the incoming values from the incoming tuple streams, and does not discard the duplicate key fields. As mentioned above, on outer joins where there is no equivalent key in the alternate stream, null values are used.

For example using the data above, the result Tuple of an

"inner" join with join key value of 2 would be

[2,c,2,C]. The result Tuple of an "outer" join with

join key value of 1 would be

[1,b,null,null].

- InnerJoin

-

An inner join only returns a joined Tuple if neither bag for the join key is empty.

[0,a,0,A] [2,c,2,C]

- OuterJoin

-

An outer join performs a join if one bag (left or right) for the join key is empty, or if neither bag is empty.

[0,a,0,A] [1,b,null,null] [2,c,2,C] [null,null,3,D]

- LeftJoin

-

A left join can also be stated as a left inner and right outer join, where it is acceptable for the right bag to be empty (but not the left).

[0,a,0,A] [1,b,null,null] [2,c,2,C]

- RightJoin

-

A right join can also be stated as a left outer and right inner join, where it is acceptable for the left bag to be empty (but not the right).

[0,a,0,A] [2,c,2,C] [null,null,3,D]

- MixedJoin

-

A mixed join is where 3 or more tuple streams are joined, using a small Boolean array to specify each of the join types to use. For more information, see the cascading.pipe.cogroup.MixedJoin class in the Javadoc.

- Custom

-

Developers can subclass the cascading.pipe.cogroup.Joiner class to create custom join operations.
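The four two-stream join types can be reproduced on the sample LHS and RHS data with a short plain-Java sketch. It uses only the standard library and is not how Cascading's Joiner classes are implemented. `JoinSketch`, `Kind`, `bags`, and `join` are hypothetical names. The sketch first co-groups both streams into a bag per key, then decides per key whether an empty bag is acceptable for the chosen join type.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.TreeSet;

class JoinSketch {
  enum Kind { INNER, OUTER, LEFT, RIGHT }

  // Collects the tuples of one stream into a "bag" per join key (tuple position 0).
  static Map<Integer, List<Object[]>> bags(Object[][] stream) {
    Map<Integer, List<Object[]>> bags = new TreeMap<>();
    for (Object[] tuple : stream) {
      bags.computeIfAbsent((Integer) tuple[0], k -> new ArrayList<>()).add(tuple);
    }
    return bags;
  }

  // Joins two streams of [key, value] tuples and renders each result as text.
  static List<String> join(Object[][] lhs, Object[][] rhs, Kind kind) {
    Map<Integer, List<Object[]>> left = bags(lhs);
    Map<Integer, List<Object[]>> right = bags(rhs);
    TreeSet<Integer> keys = new TreeSet<>(left.keySet());
    keys.addAll(right.keySet());

    boolean rightMayBeEmpty = kind == Kind.OUTER || kind == Kind.LEFT;
    boolean leftMayBeEmpty = kind == Kind.OUTER || kind == Kind.RIGHT;
    // A bag holding one all-null tuple substitutes for a missing side.
    List<Object[]> nullBag = Collections.singletonList(new Object[] { null, null });

    List<String> joined = new ArrayList<>();
    for (Integer key : keys) {
      List<Object[]> l = left.getOrDefault(key, Collections.emptyList());
      List<Object[]> r = right.getOrDefault(key, Collections.emptyList());
      if ((l.isEmpty() && !leftMayBeEmpty) || (r.isEmpty() && !rightMayBeEmpty)) {
        continue; // this join type does not accept the empty side
      }
      if (l.isEmpty()) l = nullBag;
      if (r.isEmpty()) r = nullBag;
      for (Object[] a : l) {
        for (Object[] b : r) {
          joined.add("[" + a[0] + "," + a[1] + "," + b[0] + "," + b[1] + "]");
        }
      }
    }
    return joined;
  }

  public static void main(String[] args) {
    Object[][] lhs = { { 0, "a" }, { 1, "b" }, { 2, "c" } };
    Object[][] rhs = { { 0, "A" }, { 2, "C" }, { 3, "D" } };
    for (Kind kind : Kind.values()) {
      System.out.println(kind + " " + join(lhs, rhs, kind));
    }
  }
}
```

Note that the duplicate key is kept in every joined tuple, which is why unique declared field names are needed on the Cascading side.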

CoGroup attempts to store the entire "bag" of tuples for the current unique key from the right-hand stream in memory for rapid joining to the left-hand stream. If the bag is very large, it may exceed a configurable threshold and be spilled to disk, reducing performance; if the threshold value is set too high, the bag may instead cause a memory error. Thus it's usually best to put the stream with the largest groupings on the left-hand side and, if necessary, adjust the spill threshold as described in the Javadoc.

HashJoin performs a join (similar to a

SQL join) on two or more streams, and emits a stream of tuples that

contain fields from all of the input streams. With a join, the tuples

in the different input streams do not typically contain the same set

of fields.

As with CoGroup, the field names must

all be unique, including the names of the key fields, to avoid

duplicate field names in the emitted Tuple. If

necessary, use the declaredFields argument to

specify unique field names for the output.

An inner join is performed by default, but you can choose inner, outer, left, right, or mixed (three or more streams). Self-joins are permitted. Developers can also create custom Joiners if desired. For more information on types of joins, refer to the section called “The Joiner class” or the Javadoc.

Example 3.7. Joining Two Tuple Streams

Fields lhsFields = new Fields( "fieldA", "fieldB" );

Fields rhsFields = new Fields( "fieldC", "fieldD" );

Pipe join =

new HashJoin( lhs, lhsFields, rhs, rhsFields, new InnerJoin() );

The example above performs an inner join on two streams ("lhs"

and "rhs"), based on common values in two fields. The field names that

are specified in lhsFields and

rhsFields are among the field names previously

declared for the two input streams.

For joins that do not require grouping,

HashJoin provides faster execution than

CoGroup, but it operates within stricter

limitations. It is optimized for joining one or more small streams

to no more than one large stream.

Unlike CoGroup,

HashJoin attempts to keep the entire

right-hand stream in memory for rapid comparison (not just the

current grouping, as no grouping is performed for a

HashJoin). Thus a very large tuple stream in

the right-hand stream may exceed a configurable spill-to-disk

threshold, reducing performance and potentially causing a memory

error. For this reason, it's advisable to use the smaller stream on

the right-hand side. Additionally, it may be helpful to adjust the

spill threshold as described in the Javadoc.
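The memory trade-off described here follows from the general shape of a hash join, which can be sketched in plain Java as below. This is an illustration under stated assumptions, not Cascading's HashJoin implementation: `HashJoinSketch` and `innerJoin` are hypothetical names, tuples are simplified to two-element String arrays keyed on position 0, and no spilling to disk is modeled.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.Iterator;
import java.util.List;
import java.util.Map;

class HashJoinSketch {
  // Inner-joins a streamed left side against a right side held fully in memory.
  static List<String> innerJoin(Iterator<String[]> largeLeft, List<String[]> smallRight) {
    // Build phase: index the entire right-hand stream by join key.
    Map<String, List<String[]>> index = new HashMap<>();
    for (String[] tuple : smallRight) {
      index.computeIfAbsent(tuple[0], k -> new ArrayList<>()).add(tuple);
    }

    // Probe phase: the left-hand stream is read once and never buffered.
    List<String> joined = new ArrayList<>();
    while (largeLeft.hasNext()) {
      String[] left = largeLeft.next();
      for (String[] right : index.getOrDefault(left[0], new ArrayList<>())) {
        joined.add(left[0] + "," + left[1] + "," + right[0] + "," + right[1]);
      }
    }
    return joined;
  }

  public static void main(String[] args) {
    List<String[]> left = Arrays.asList(
        new String[] { "1", "x" }, new String[] { "2", "y" }, new String[] { "1", "z" });
    List<String[]> right = Arrays.asList(
        new String[] { "1", "one" }, new String[] { "3", "three" });
    System.out.println(innerJoin(left.iterator(), right));
  }
}
```

Memory use grows with the size of the indexed side only, which is why the smaller stream belongs on the right.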

Due to the potential difficulties of using

HashJoin (as compared to the slower but much

more reliable CoGroup), developers should

thoroughly understand this class before attempting to use it.

Frequently the HashJoin is fed a filtered-down stream of Tuples from what was originally a very large file. To prevent the large file from being replicated throughout a cluster when running in Hadoop mode, use a Checkpoint pipe at the point where the data has been filtered down to its smallest, before it is streamed into a HashJoin. This forces the Tuple stream to be persisted to disk and a new FlowStep (MapReduce job) to be created to read the smaller data size more efficiently.

By default, the properties passed to a FlowConnector subclass become the defaults for every Flow instance created by that FlowConnector. In the past, if some of the Flow instances needed different properties, it was necessary to create additional FlowConnectors to set those properties. However, it is now possible to set properties at the Pipe scope and at the process FlowStep scope.

Setting properties at the Pipe scope lets you set a property that is only visible to a given Pipe instance (and its child Operation). This allows Operations such as custom Functions to be dynamically configured.

More importantly, setting properties at the process FlowStep scope allows you to set properties on a Pipe that are inherited by the underlying process during runtime. When running on the Apache Hadoop platform (i.e., when using the HadoopFlowConnector), a FlowStep is the current MapReduce job. Thus a Hadoop-specific property can be set on a Pipe, such as a CoGroup. During runtime, the FlowStep (MapReduce job) that the CoGroup executes in is configured with the given property - for example, a spill threshold, or the number of reducer tasks for Hadoop to deploy.

The following code samples demonstrate the basic form for both the Pipe scope and the process FlowStep scope.

Example 3.8. Pipe Scope

Pipe join =

new HashJoin( lhs, common, rhs, common, declared, new InnerJoin() );

SpillableProps props = SpillableProps.spillableProps()

.setCompressSpill( true )

.setMapSpillThreshold( 50 * 1000 );

props.setProperties( join.getConfigDef(), ConfigDef.Mode.REPLACE );

Example 3.9. Step Scope

Pipe join =

new HashJoin( lhs, common, rhs, common, declared, new InnerJoin() );

SpillableProps props = SpillableProps.spillableProps()

.setCompressSpill( true )

.setMapSpillThreshold( 50 * 1000 );

props.setProperties( join.getStepConfigDef(), ConfigDef.Mode.DEFAULT );

As of Cascading 2.2, SubAssemblies can now be configured via the ConfigDef method.

Cascading supports pluggable planners that allow it to execute on differing platforms. Planners are invoked by an associated FlowConnector subclass. Currently, the following connectors are provided:

- LocalFlowConnector

-

The cascading.flow.local.LocalFlowConnector provides a "local" mode planner for running Cascading completely in memory on the current computer. This allows for fast execution of Flows against local files or any other compatible custom Tap and Scheme classes.

The local mode planner and platform were not designed to scale beyond available memory, CPU, or disk on the current machine. Thus any memory-intensive processes that use GroupBy, CoGroup, or HashJoin are likely to fail against moderately large files.

Local mode is useful for development, testing, and interactive data exploration against sample sets.

- HadoopFlowConnector

-

The cascading.flow.hadoop.HadoopFlowConnector provides a planner for running Cascading on an Apache Hadoop 1 cluster. This allows Cascading to execute against extremely large data sets over a cluster of computing nodes.

- Hadoop2MR1FlowConnector

-

The cascading.flow.hadoop2.Hadoop2MR1FlowConnector provides a planner for running Cascading on an Apache Hadoop 2 cluster. This class is roughly equivalent to the above HadoopFlowConnector except it uses Hadoop 2 specific properties and is compiled against Hadoop 2 API binaries.

Cascading's support for pluggable planners allows a

pipe assembly to be executed on an arbitrary platform, using

platform-specific Tap and Scheme classes that hide the platform-related

I/O details from the developer. For example, Hadoop uses

org.apache.hadoop.mapred.InputFormat to read

data, but local mode is happy with a

java.io.FileInputStream. This detail is hidden

from developers unless they are creating custom Tap and Scheme

classes.

All input data comes in from, and all output data goes out to,

some instance of cascading.tap.Tap. A tap

represents a data resource - such as a file on the local file system, on

a Hadoop distributed file system, or on Amazon S3. A tap can be read

from, which makes it a source, or

written to, which makes it a sink.

Or, more commonly, taps act as both sinks and sources when shared

between flows.

The platform on which your application is running (Cascading local or Hadoop) determines which specific classes you can use. Details are provided in the sections below.

If the Tap is about where the data is and how to access it, the Scheme is about what the data is and how to read it. Every Tap must have a Scheme that describes the data. Cascading provides four Scheme classes:

- TextLine

-

TextLine reads and writes raw text files and returns tuples which, by default, contain two fields specific to the platform used. The first field is either the byte offset or line number, and the second field is the actual line of text. When written to, all Tuple values are converted to Strings delimited with the TAB character (\t). A TextLine scheme is provided for both the local and Hadoop modes.

By default TextLine uses the UTF-8 character set. This can be overridden on the appropriate TextLine constructor.

- TextDelimited

-

TextDelimited reads and writes character-delimited files in standard formats such as CSV (comma-separated values), TSV (tab-separated values), and so on. When written to, all Tuple values are converted to Strings and joined with the specified character delimiter. This Scheme can optionally handle quoted values with custom quote characters. Further, TextDelimited can coerce each value to a primitive type when reading a text file. A TextDelimited scheme is provided for both the local and Hadoop modes.

By default TextDelimited uses the UTF-8 character set. This can be overridden on the appropriate TextDelimited constructor.

- SequenceFile

-

SequenceFile is based on the Hadoop Sequence file, which is a binary format. When written to or read from, all Tuple values are saved in their native binary form. This is the most efficient file format - but be aware that the resulting files are binary and can only be read by Hadoop applications running on the Hadoop platform.

- WritableSequenceFile

-

Like the SequenceFile Scheme, WritableSequenceFile is based on the Hadoop Sequence file, but it was designed to read and write key and/or value Hadoop Writable objects directly. This is very useful if you have sequence files created by other applications. During writing (sinking), specified key and/or value fields are serialized directly into the sequence file. During reading (sourcing), the key and/or value objects are deserialized and wrapped in a Cascading Tuple object and passed to the downstream pipe assembly. This class is only available when running on the Hadoop platform.

There's a key difference between the

TextLine and

SequenceFile schemes. With the

SequenceFile scheme, data is stored as binary

tuples, which can be read without having to be parsed. But with the

TextLine option, Cascading must parse each line

into a Tuple before processing it, causing a

performance hit.
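The parsing step that text-based schemes must perform on every line can be illustrated with a small plain-Java sketch. It is not the TextDelimited implementation: `DelimitedLineSketch` and `parse` are hypothetical names, quoted values are not handled, and only a few target types are coerced.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

class DelimitedLineSketch {
  // Splits one line on a literal delimiter and coerces each value to the
  // requested type; any unlisted type is kept as a String.
  static List<Object> parse(String line, String delimiter, Class<?>... types) {
    String[] parts = line.split(Pattern.quote(delimiter), -1);
    if (parts.length != types.length) {
      throw new IllegalArgumentException(
          "expected " + types.length + " values but found " + parts.length);
    }
    List<Object> tuple = new ArrayList<>();
    for (int i = 0; i < parts.length; i++) {
      if (types[i] == Integer.class) {
        tuple.add(Integer.valueOf(parts[i]));
      } else if (types[i] == Long.class) {
        tuple.add(Long.valueOf(parts[i]));
      } else if (types[i] == Double.class) {
        tuple.add(Double.valueOf(parts[i]));
      } else {
        tuple.add(parts[i]);
      }
    }
    return tuple;
  }

  public static void main(String[] args) {
    System.out.println(parse("john\tdoe\t42", "\t", String.class, String.class, Integer.class));
  }
}
```

A binary format avoids this per-line split and conversion, because values are stored in their typed form.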

Depending on which platform you use (Cascading local or Hadoop), the classes you use to specify schemes will vary. Platform-specific details for each standard scheme are shown below.

Table 3.2. Platform-specific tap scheme classes

| Description | Cascading local platform | Hadoop platform |

| Package Name | cascading.scheme.local | cascading.scheme.hadoop |

| Read lines of text | TextLine | TextLine |

| Read delimited text (CSV, TSV, etc) | TextDelimited | TextDelimited |

| Cascading proprietary efficient binary | | SequenceFile |

| External Hadoop application binary (custom Writable type) | | WritableSequenceFile |

The following sample code creates a new Hadoop FileSystem Tap that can read and write raw text files. Since only one field name is provided, the "offset" field is discarded, resulting in an input tuple stream with only "line" values.

Here are the most commonly-used tap types:

- FileTap

-

The cascading.tap.local.FileTap tap is used with the Cascading local platform to access files on the local file system.

- Hfs

-

The cascading.tap.hadoop.Hfs tap uses the current Hadoop default file system, when running on the Hadoop platform.

If Hadoop is configured for "Hadoop local mode" (not to be confused with Cascading local mode), its default file system is the local file system. If configured for distributed mode, its default file system is typically the Hadoop distributed file system.

Note that Hadoop can be forced to use an external file system by specifying a prefix to the URL passed into a new Hfs tap. For instance, using "s3://somebucket/path" tells Hadoop to use the S3 FileSystem implementation to access files in an Amazon S3 bucket. More information on this can be found in the Javadoc.

Also provided are six utility taps:

- MultiSourceTap

-

The cascading.tap.MultiSourceTap is used to tie multiple tap instances into a single tap for use as an input source. The only restriction is that all the tap instances passed to a new MultiSourceTap share the same Scheme classes (not necessarily the same Scheme instance).

- MultiSinkTap

-

The cascading.tap.MultiSinkTap is used to tie multiple tap instances into a single tap for use as output sinks. At runtime, for every Tuple output by the pipe assembly, each child tap to the MultiSinkTap will sink the Tuple.

- PartitionTap

-

The cascading.tap.hadoop.PartitionTap and cascading.tap.local.PartitionTap are used to sink tuples into directory paths based on the values in the Tuple. More can be read below in Partition Taps. Note that the TemplateTap has been deprecated in favor of the PartitionTap.

- GlobHfs

-

The cascading.tap.hadoop.GlobHfs tap accepts Hadoop style "file globbing" expression patterns. This allows for multiple paths to be used as a single source, where all paths match the given pattern. This tap is only available when running on the Hadoop platform.

- DecoratorTap

-

The cascading.tap.DecoratorTap is a utility helper for wrapping an existing Tap with new functionality, via sub-class, and/or adding 'meta-data' to a Tap instance via the generic MetaInfo instance field. Further, on the Hadoop platform, planner-created intermediate and Checkpoint Taps can be wrapped by a DecoratorTap implementation by the Cascading Planner. See cascading.flow.FlowConnectorProps for details.

- DistCacheTap

-

The cascading.tap.hadoop.DistCacheTap is a sub-class of the cascading.tap.DecoratorTap that can wrap a cascading.tap.hadoop.Hfs instance. It allows for writing to HDFS, but reading from the Hadoop Distributed Cache under the right circumstances, specifically if the Tap is being read into the small side of a cascading.pipe.HashJoin.

Depending on which platform you use (Cascading local or Hadoop), the classes you use to specify file systems will vary. Platform-specific details for each standard tap type are shown below.

Table 3.3. Platform-specific details for setting file system

| Description | Either platform | Cascading local platform | Hadoop platform |

| Package Name | cascading.tap | cascading.tap.local | cascading.tap.hadoop |

| File access | | FileTap | Hfs |

| Multiple Taps as single source | MultiSourceTap | | |

| Multiple Taps as single sink | MultiSinkTap | | |

| Bin/Partition data into multiple files | | PartitionTap | PartitionTap |

| Pattern match multiple files/dirs | | | GlobHfs |

| Wrapping a Tap with MetaData / Decorating intra-Flow Taps | DecoratorTap | | |

| Reading from the Hadoop Distributed Cache | | | DistCacheTap |

Example 3.11. Overwriting An Existing Resource

Tap tap =

new Hfs( new TextLine( new Fields( "line" ) ), path, SinkMode.REPLACE );

All applications created with Cascading read data from one or more sources, process it, then write data to one or more sinks. This is done via the various Tap classes, where each class abstracts different types of back-end systems that store data as files, tables, blobs, and so on. But in order to sink data, some systems require that the resource (e.g., a file) not exist before processing, so it must be removed (deleted) before the processing can begin. Other systems may allow for appending or updating of a resource (typical with database tables).

When creating a new Tap instance, a

SinkMode may be provided so that the Tap will

know how to handle any existing resources. Note that not all Taps

support all SinkMode values - for example, Hadoop

does not support appends (updates) from a MapReduce job.

The available SinkModes are:

- SinkMode.KEEP

-

This is the default behavior. If the resource exists, attempting to write over it will fail.

- SinkMode.REPLACE

-

This allows Cascading to delete the file immediately after the Flow is started.

- SinkMode.UPDATE

-

Allows for new tap types that can update or append - for example, to update or add records in a database. Each tap may implement this functionality in its own way. Cascading recognizes this update mode, and if a resource exists, will not fail or attempt to delete it.

Note that Cascading itself only uses these labels internally to know when to automatically call deleteResource() on the Tap or to leave the Tap alone. It is up to the Tap implementation to actually perform a write or update when processing starts. Thus, when start() or complete() is called on a Flow, any sink Tap labeled SinkMode.REPLACE will have its deleteResource() method called.

Conversely, if a

Flow is in a Cascade and

the Tap is set to

SinkMode.KEEP or

SinkMode.REPLACE,

deleteResource() will be called if and only if

the sink is stale (i.e., older than the source). This allows a

Cascade to behave like a "make" or "ant" build

file, only running Flows that should be run. For more information, see

Skipping Flows.
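The decisions described in this section can be summarized in a small plain-Java sketch. It only restates the prose as code and is not taken from the Cascading source: the `Mode` enum mirrors the three SinkMode labels but is not the cascading.tap.SinkMode class, and `SinkModeSketch`, `Action`, `onFlowStart`, and `isStale` are hypothetical names.

```java
class SinkModeSketch {
  // Mirrors the three labels described in the text.
  enum Mode { KEEP, REPLACE, UPDATE }

  // Possible outcomes for a sink when a standalone Flow starts.
  enum Action { WRITE, DELETE_THEN_WRITE, LEAVE_TO_TAP, FAIL }

  // Assumption: when no resource exists yet, every mode simply writes.
  static Action onFlowStart(Mode mode, boolean resourceExists) {
    if (!resourceExists) {
      return Action.WRITE;
    }
    switch (mode) {
      case REPLACE:
        return Action.DELETE_THEN_WRITE; // resource is deleted, then written
      case UPDATE:
        return Action.LEAVE_TO_TAP;      // no failure, no delete; the tap decides
      default:
        return Action.FAIL;              // KEEP: writing over an existing resource fails
    }
  }

  // Inside a Cascade, a sink counts as stale when it is older than its source.
  static boolean isStale(long sinkModifiedMillis, long sourceModifiedMillis) {
    return sinkModifiedMillis < sourceModifiedMillis;
  }

  public static void main(String[] args) {
    for (Mode mode : Mode.values()) {
      System.out.println(mode + " with existing resource: " + onFlowStart(mode, true));
    }
    System.out.println("stale: " + isStale(1000L, 2000L));
  }
}
```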

It's also important to understand how Hadoop deals with

directories. By default, Hadoop cannot source data from directories with

nested sub-directories, and it cannot write to directories that already

exist. However, the good news is that you can simply point the

Hfs tap to a directory of data files, and they

are all used as input - there's no need to enumerate each individual

file into a MultiSourceTap. If there are nested

directories, use GlobHfs.

Cascading applications can perform complex manipulation or "field

algebra" on the fields stored in tuples, using Fields sets, a feature of the

Fields class that provides a sort of wildcard

tool for referencing sets of field values.

These predefined Fields sets are constant values on the

Fields class. They can be used in many places

where the Fields class is expected. They are:

- Fields.ALL

-

The cascading.tuple.Fields.ALL constant is a wildcard that represents all the currently available fields.

// incoming -> first, last, age

String expression = "first + \" \" + last";

Fields fields = new Fields( "full" );

ExpressionFunction full = new ExpressionFunction( fields, expression, String.class );

assembly = new Each( assembly, new Fields( "first", "last" ), full, Fields.ALL );

// outgoing -> first, last, age, full

- Fields.RESULTS

-

The cascading.tuple.Fields.RESULTS constant is used to represent the field names of the current operation's return values. This Fields set may only be used as an output selector on a pipe, causing the pipe to output a tuple containing the operation results.

// incoming -> first, last, age

String expression = "first + \" \" + last";

Fields fields = new Fields( "full" );

ExpressionFunction full = new ExpressionFunction( fields, expression, String.class );

Fields firstLast = new Fields( "first", "last" );

assembly = new Each( assembly, firstLast, full, Fields.RESULTS );

// outgoing -> full

- Fields.REPLACE

-

The cascading.tuple.Fields.REPLACE constant is used as an output selector to inline-replace values in the incoming tuple with the results of an operation. This convenient Fields set allows operations to overwrite the value stored in the specified field. The current operation must either specify the identical argument selector field names used by the pipe, or use the ARGS Fields set.

// incoming -> first, last, age

// coerce to int

Identity function = new Identity( Fields.ARGS, Integer.class );

Fields age = new Fields( "age" );

assembly = new Each( assembly, age, function, Fields.REPLACE );

// outgoing -> first, last, age

- Fields.SWAP

-

The cascading.tuple.Fields.SWAP constant is used as an output selector to swap the operation arguments with its results. Neither the argument and result field names nor their number need to be the same. This is useful when the operation arguments are no longer necessary and the result Fields and values should be appended to the remainder of the input field names and Tuple.

// incoming -> first, last, age

String expression = "first + \" \" + last";

Fields fields = new Fields( "full" );

ExpressionFunction full = new ExpressionFunction( fields, expression, String.class );

Fields firstLast = new Fields( "first", "last" );

assembly = new Each( assembly, firstLast, full, Fields.SWAP );

// outgoing -> age, full

- Fields.ARGS

-

The cascading.tuple.Fields.ARGS constant is used to let a given operation inherit the field names of its argument Tuple. This Fields set is a convenience and is typically used when the Pipe output selector is RESULTS or REPLACE. It is specifically used by the Identity Function when coercing values from Strings to primitive types.

// incoming -> first, last, age

// coerce to int

Identity function = new Identity( Fields.ARGS, Integer.class );

Fields age = new Fields( "age" );

assembly = new Each( assembly, age, function, Fields.REPLACE );

// outgoing -> first, last, age

- Fields.GROUP

-

The cascading.tuple.Fields.GROUP constant represents all the fields used as the grouping key in the most recent grouping. If no previous grouping exists in the pipe assembly, GROUP represents all the current field names.

// incoming -> first, last, age

assembly = new GroupBy( assembly, new Fields( "first", "last" ) );

FieldJoiner full = new FieldJoiner( new Fields( "full" ), " " );

assembly = new Each( assembly, Fields.GROUP, full, Fields.ALL );

// outgoing -> first, last, age, full

- Fields.VALUES

-

The cascading.tuple.Fields.VALUES constant represents all the fields not used as grouping fields in a previous Group. That is, if you have fields "a", "b", and "c", and group on "a", Fields.VALUES will resolve to "b" and "c".

// incoming -> first, last, age

assembly = new GroupBy( assembly, new Fields( "age" ) );

FieldJoiner full = new FieldJoiner( new Fields( "full" ), " " );

assembly = new Each( assembly, Fields.VALUES, full, Fields.ALL );

// outgoing -> first, last, age, full

- Fields.UNKNOWN

-

The cascading.tuple.Fields.UNKNOWN constant is used when Fields must be declared, but it's not known how many fields or what their names are. This allows for processing tuples of arbitrary length from an input source or some operation. Use this Fields set with caution.

// incoming -> line

RegexSplitter function = new RegexSplitter( Fields.UNKNOWN, "\t" );

Fields fields = new Fields( "line" );

assembly = new Each( assembly, fields, function, Fields.RESULTS );

// outgoing -> unknown

- Fields.NONE

-

The cascading.tuple.Fields.NONE constant is used to specify no fields. It is typically used as an argument selector for Operations that do not process any Tuples, like cascading.operation.Insert.

// incoming -> first, last, age

Insert constant = new Insert( new Fields( "zip" ), "77373" );

assembly = new Each( assembly, Fields.NONE, constant, Fields.ALL );

// outgoing -> first, last, age, zip
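The effect of the four output selectors ALL, RESULTS, REPLACE, and SWAP on the outgoing field names can be modeled with simple list operations. The following plain-Java sketch is only a model of the "incoming" and "outgoing" comments in the entries above, not Cascading's field resolution code, and `OutputSelectorSketch`, `Selector`, and `outgoing` are hypothetical names.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class OutputSelectorSketch {
  enum Selector { ALL, RESULTS, REPLACE, SWAP }

  // Computes the outgoing field names from the incoming names, the argument
  // selector, and the field names declared by the operation.
  static List<String> outgoing(List<String> incoming, List<String> arguments,
                               List<String> results, Selector selector) {
    List<String> out = new ArrayList<>();
    switch (selector) {
      case ALL:      // incoming fields followed by the operation results
        out.addAll(incoming);
        out.addAll(results);
        break;
      case RESULTS:  // only the operation results
        out.addAll(results);
        break;
      case REPLACE:  // same names as incoming; argument values are overwritten in place
        out.addAll(incoming);
        break;
      case SWAP:     // drop the arguments, append the results to the remainder
        out.addAll(incoming);
        out.removeAll(arguments);
        out.addAll(results);
        break;
    }
    return out;
  }

  public static void main(String[] args) {
    List<String> incoming = Arrays.asList("first", "last", "age");
    List<String> arguments = Arrays.asList("first", "last");
    List<String> results = Arrays.asList("full");
    for (Selector selector : Selector.values()) {
      System.out.println(selector + " -> " + outgoing(incoming, arguments, results, selector));
    }
  }
}
```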

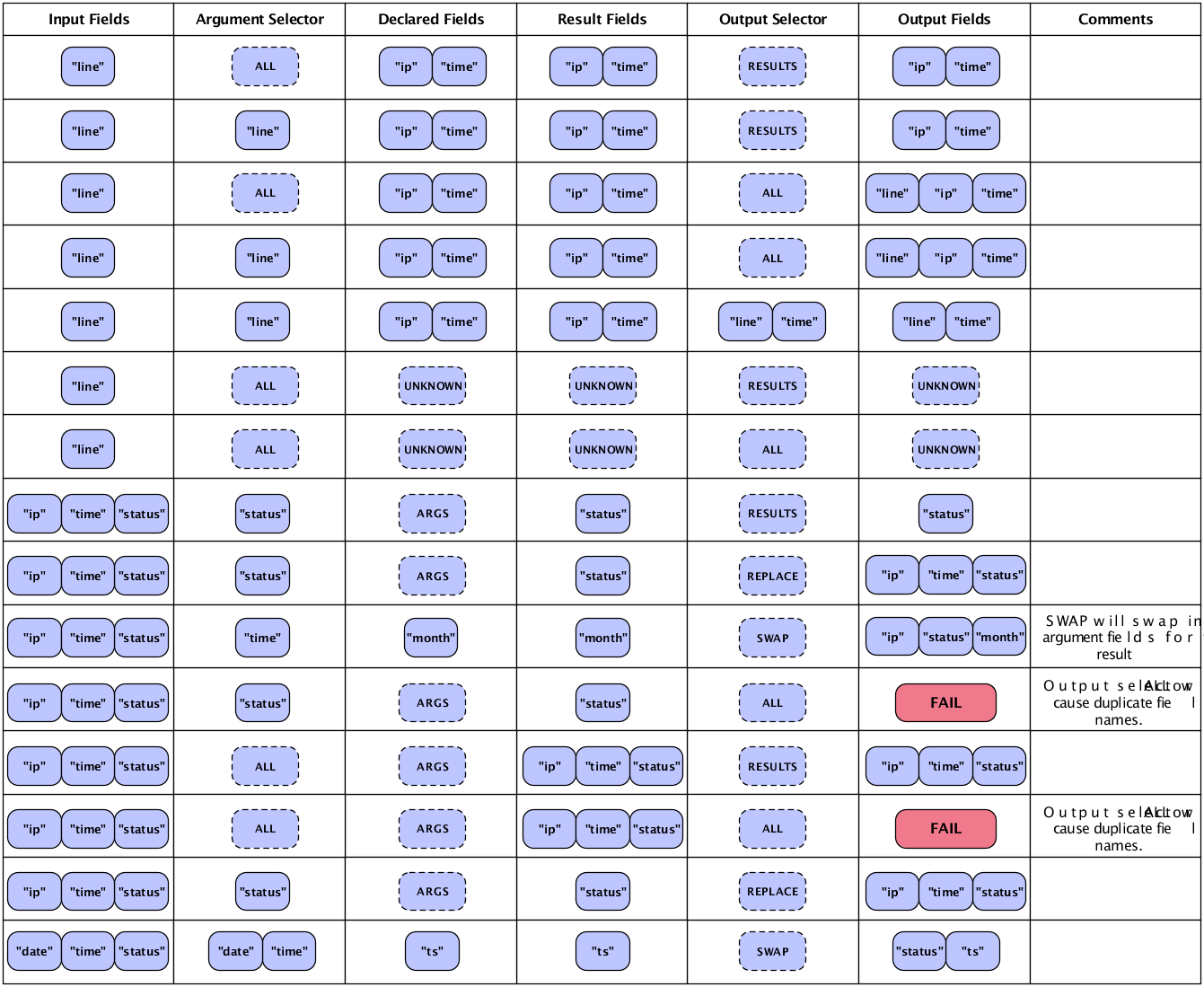

The chart below shows common ways to merge input and

result fields for the desired output fields. A few minutes with this

chart may help clarify the discussion of fields, tuples, and pipes. Also

see Each and Every Pipes for details on the different columns

and their relationships to the Each and

Every pipes and Functions, Aggregators, and

Buffers.

When pipe assemblies are bound to source and sink taps, a

Flow is created. Flows are executable in the

sense that, once they are created, they can be started and will execute

on the specified platform. If the Hadoop platform is specified, the Flow

will execute on a Hadoop cluster.

A Flow is essentially a data processing pipeline that reads data from sources, processes the data as defined by the pipe assembly, and writes data to the sinks. Input source data does not need to exist at the time the Flow is created, but it must exist by the time the Flow is executed (unless it is executed as part of a Cascade - see Cascades for more on this).

The most common pattern is to create a Flow from an existing pipe

assembly. But there are cases where a MapReduce job (if running on

Hadoop) has already been created, and it makes sense to encapsulate it

in a Flow class so that it may participate in a

Cascade and be scheduled with other

Flow instances. Alternatively, via the Riffle

annotations, third-party applications can participate in a

Cascade, and complex algorithms that result in

iterative Flow executions can be encapsulated as a single Flow. All

patterns are covered here.

Example 3.12. Creating a new Flow

HadoopFlowConnector flowConnector = new HadoopFlowConnector();

Flow flow =

flowConnector.connect( "flow-name", source, sink, pipe );

To create a Flow, it must be planned through one of the FlowConnector subclass objects. In Cascading, each platform (i.e., local and Hadoop) has its own connectors. The connect() method is used to create new Flow instances based on a set of sink taps, source taps, and a pipe assembly. Above is a trivial example that uses the Hadoop mode connector.

Example 3.13. Binding taps in a Flow

// the "left hand side" assembly head

Pipe lhs = new Pipe( "lhs" );

lhs = new Each( lhs, new SomeFunction() );

lhs = new Each( lhs, new SomeFilter() );

// the "right hand side" assembly head

Pipe rhs = new Pipe( "rhs" );

rhs = new Each( rhs, new SomeFunction() );

// joins the lhs and rhs

Pipe join = new CoGroup( lhs, rhs );

join = new Every( join, new SomeAggregator() );

Pipe groupBy = new GroupBy( join );

groupBy = new Every( groupBy, new SomeAggregator() );

// the tail of the assembly

groupBy = new Each( groupBy, new SomeFunction() );

Tap lhsSource = new Hfs( new TextLine(), "lhs.txt" );

Tap rhsSource = new Hfs( new TextLine(), "rhs.txt" );

Tap sink = new Hfs( new TextLine(), "output" );

FlowDef flowDef = new FlowDef()

.setName( "flow-name" )

.addSource( rhs, rhsSource )

.addSource( lhs, lhsSource )

.addTailSink( groupBy, sink );

Flow flow = new HadoopFlowConnector().connect( flowDef );

The example above expands on our previous pipe assembly example by creating multiple source and sink taps and planning a Flow. Note there are two branches in the pipe assembly - one named "lhs" and the other named "rhs". Internally Cascading uses those names to bind the source taps to the pipe assembly. New in 2.0, a FlowDef can be created to manage the names and taps that must be passed to a FlowConnector.

The FlowConnector constructor accepts the

java.util.Properties object so that default

Cascading and any platform-specific properties can be passed down

through the planner to the platform at runtime. In the case of Hadoop,

any relevant Hadoop *-default.xml properties may be

added. For instance, it's very common to add

mapred.map.tasks.speculative.execution,

mapred.reduce.tasks.speculative.execution, or

mapred.child.java.opts.
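As a minimal sketch of the paragraph above, the properties can be collected in a plain java.util.Properties object before being handed to the connector constructor. The class name ConnectorPropertiesSketch and the property values are illustrative assumptions, not recommended settings; only the property names come from the text.

```java
import java.util.Properties;

class ConnectorPropertiesSketch
  {
  // Builds the Properties object that would be passed to a FlowConnector constructor.
  // The values are assumptions chosen only for illustration.
  static Properties buildProperties()
    {
    Properties properties = new Properties();

    // turn off speculative execution for map and reduce tasks
    properties.setProperty( "mapred.map.tasks.speculative.execution", "false" );
    properties.setProperty( "mapred.reduce.tasks.speculative.execution", "false" );

    // JVM options for the child task processes
    properties.setProperty( "mapred.child.java.opts", "-Xmx512m" );

    return properties;
    }

  public static void main( String[] args )
    {
    Properties properties = buildProperties();

    for( String name : properties.stringPropertyNames() )
      System.out.println( name + "=" + properties.getProperty( name ) );
    }
  }
```

The resulting object would then be passed to the connector in the same way as the properties built in Example 3.14.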

One of the two properties that must always be set for production applications is the application Jar class or Jar path.

Example 3.14. Configuring the Application Jar

Properties properties = new Properties();

// pass in the class name of your application

// this will find the parent jar at runtime

properties = AppProps.appProps()

.setName( "sample-app" )

.setVersion( "1.2.3" )

.addTags( "deploy:prod", "team:engineering" )

.setJarClass( Main.class ) // find jar from class

.buildProperties( properties ); // returns a copy

// ALTERNATIVELY ...

// pass in the path to the parent jar

properties = AppProps.appProps()

.setName( "sample-app" )

.setVersion( "1.2.3" )

.addTags( "deploy:prod", "team:engineering" )

.setJarPath( pathToJar ) // set jar path

.buildProperties( properties ); // returns a copy

// pass properties to the connector

FlowConnector flowConnector = new HadoopFlowConnector( properties );

More information on packaging production applications can be found in Executing Processes.

Since the FlowConnector can be reused,

any properties passed to the constructor are handed to all the

Flows it is used to create. If Flows need to be created with different

default properties, a new FlowConnector will need to be instantiated

with those properties, or properties will need to be set on a given

Pipe or Tap instance

directly - via the getConfigDef() or

getStepConfigDef() methods.

When a Flow participates in a

Cascade, the

Flow.isSkipFlow() method is consulted before

calling Flow.start() on the flow. The result is

based on the Flow's skip strategy.

By default, isSkipFlow() returns true if none of the sinks are stale - i.e., every sink exists and no sink resource is older than the sources. However, the strategy can be changed via the Flow.setFlowSkipStrategy() and Cascade.setFlowSkipStrategy() methods, which can be called before or after a particular Flow instance has been created.

Cascading provides a choice of two standard skip strategies:

- FlowSkipIfSinkNotStale

This strategy, cascading.flow.FlowSkipIfSinkNotStale, is the default. Sinks are treated as stale if they don't exist or the sink resources are older than the sources. If the SinkMode for the sink tap is REPLACE, then the tap is treated as stale.

- FlowSkipIfSinkExists

The cascading.flow.FlowSkipIfSinkExists strategy skips the Flow if the sink tap exists, regardless of age. If the SinkMode for the sink tap is REPLACE, then the tap is treated as stale.

Additionally, you can create custom skip strategies by implementing the cascading.flow.FlowSkipStrategy interface.
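The decision rules of the two standard strategies can be modeled in plain Java. This is a simplified sketch, not the Cascading implementation: FlowState, SkipStrategy, and the two constants are hypothetical stand-ins that only encode the staleness rules described above.

```java
class SkipStrategySketch
  {
  // Hypothetical stand-in for the sink and source state of a Flow
  static final class FlowState
    {
    final boolean sinkExists;
    final boolean sinkIsReplace; // models a sink tap whose SinkMode is REPLACE
    final long sinkModified;     // modification time of the sink resource
    final long sourceModified;   // modification time of the newest source

    FlowState( boolean sinkExists, boolean sinkIsReplace, long sinkModified, long sourceModified )
      {
      this.sinkExists = sinkExists;
      this.sinkIsReplace = sinkIsReplace;
      this.sinkModified = sinkModified;
      this.sourceModified = sourceModified;
      }

    // stale if the sink is missing, is REPLACE, or is older than the sources
    boolean isSinkStale()
      {
      return !sinkExists || sinkIsReplace || sinkModified < sourceModified;
      }
    }

  // Hypothetical single-method interface modeled on the idea of a skip strategy
  interface SkipStrategy
    {
    boolean skipFlow( FlowState flow );
    }

  // skip only when the sink is not stale
  static final SkipStrategy SKIP_IF_SINK_NOT_STALE = flow -> !flow.isSinkStale();

  // skip whenever the sink exists, regardless of age, unless it is REPLACE
  static final SkipStrategy SKIP_IF_SINK_EXISTS = flow -> flow.sinkExists && !flow.sinkIsReplace;

  public static void main( String[] args )
    {
    // the sink exists but is older than its source
    FlowState outdated = new FlowState( true, false, 100L, 200L );

    System.out.println( SKIP_IF_SINK_NOT_STALE.skipFlow( outdated ) ); // prints false
    System.out.println( SKIP_IF_SINK_EXISTS.skipFlow( outdated ) ); // prints true
    }
  }
```

The two strategies differ only for a sink that exists but is out of date: the first runs the Flow again, the second skips it.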

Note that Flow.start() does not consult

the isSkipFlow() method, and consequently

always tries to start the Flow if called. It is up to the user code to

call isSkipFlow() to determine whether the

current strategy indicates that the Flow should be skipped.

If a MapReduce job already exists and needs to be managed by a

Cascade, then the

cascading.flow.hadoop.MapReduceFlow class

should be used. To do this, after creating a Hadoop

JobConf instance simply pass it into the

MapReduceFlow constructor. The resulting

Flow instance can be used like any other

Flow.

Any custom Class can be treated as a Flow if given the correct

Riffle

annotations. Riffle is a set of Java annotations that identify

specific methods on a class as providing specific life-cycle and

dependency functionality. For more information, see the Riffle

documentation and examples. To use with Cascading, a Riffle-annotated

instance must be passed to the

cascading.flow.hadoop.ProcessFlow constructor.

The resulting ProcessFlow instance can

be used like any other Flow instance.

Since many algorithms need to perform multiple passes over a given data set, a Riffle-annotated Class can be written that internally creates Cascading Flows and executes them until no more passes are needed. This is like nesting Flows or Cascades in a parent Flow, which in turn can participate in a Cascade.

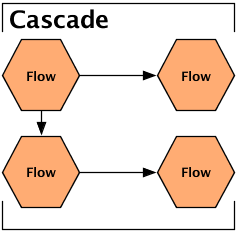

A Cascade allows multiple Flow instances to be executed as a single logical unit. If there are dependencies between the Flows, they are executed in the correct order. Further, Cascades act like Ant builds or Unix make files - that is, a Cascade only executes Flows that have stale sinks (i.e., output data that is older than the input data). For more on this, see Skipping Flows.

Example 3.15. Creating a new Cascade

CascadeConnector connector = new CascadeConnector();

Cascade cascade = connector.connect( flowFirst, flowSecond, flowThird );

When passing Flows to the CascadeConnector, order is not important. The CascadeConnector automatically identifies the dependencies between the given Flows and creates a scheduler that starts each Flow as its data sources become available. If two or more Flow instances have no interdependencies, they are submitted together so that they can execute in parallel.

For more information, see the section on Topological Scheduling.
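The dependency ordering described above can be illustrated with a small stand-alone model. This is a sketch under stated assumptions, not the CascadeConnector algorithm: FlowSpec and schedule() are hypothetical names, resources are plain strings, and flows are grouped into "waves" whose members have no unmet dependencies and could therefore run in parallel.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

class CascadeOrderSketch
  {
  // Hypothetical description of a Flow: a name plus the resources it reads and writes
  static final class FlowSpec
    {
    final String name;
    final List<String> sources;
    final List<String> sinks;

    FlowSpec( String name, List<String> sources, List<String> sinks )
      {
      this.name = name;
      this.sources = sources;
      this.sinks = sinks;
      }
    }

  // Groups flows into waves; every flow in a wave depends only on earlier waves
  static List<List<String>> schedule( List<FlowSpec> flows )
    {
    // resources that some flow in the set still has to produce
    Set<String> pending = new HashSet<>();

    for( FlowSpec flow : flows )
      pending.addAll( flow.sinks );

    List<FlowSpec> remaining = new ArrayList<>( flows );
    List<List<String>> waves = new ArrayList<>();

    while( !remaining.isEmpty() )
      {
      // a flow is ready when none of its sources is still pending
      List<FlowSpec> ready = new ArrayList<>();

      for( FlowSpec flow : remaining )
        {
        if( Collections.disjoint( flow.sources, pending ) )
          ready.add( flow );
        }

      if( ready.isEmpty() )
        throw new IllegalStateException( "flows have a cyclic dependency" );

      List<String> wave = new ArrayList<>();

      for( FlowSpec flow : ready )
        {
        wave.add( flow.name );
        pending.removeAll( flow.sinks ); // these resources are now available
        }

      remaining.removeAll( ready );
      Collections.sort( wave ); // stable output for the example
      waves.add( wave );
      }

    return waves;
    }

  // Sample flows, deliberately listed out of dependency order
  static List<FlowSpec> sampleFlows()
    {
    return Arrays.asList(
      new FlowSpec( "third", Arrays.asList( "c" ), Arrays.asList( "d" ) ),
      new FlowSpec( "first", Arrays.asList( "a" ), Arrays.asList( "b" ) ),
      new FlowSpec( "second", Arrays.asList( "b" ), Arrays.asList( "c" ) ),
      new FlowSpec( "independent", Arrays.asList( "x" ), Arrays.asList( "y" ) ) );
    }

  public static void main( String[] args )
    {
    // prints [[first, independent], [second], [third]]
    System.out.println( schedule( sampleFlows() ) );
    }
  }
```

Although the flows are supplied out of order, "first" and "independent" land in the same wave because neither reads a resource that another flow writes.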

If an instance of

cascading.flow.FlowSkipStrategy is given to a

Cascade instance (via the

Cascade.setFlowSkipStrategy() method), it is

consulted for every Flow instance managed by that Cascade, and all skip

strategies on those Flow instances are ignored. For more information on

skip strategies, see Skipping Flows.


This section covers some of the operational mechanics of running an application that uses Cascading with the Hadoop platform, including building the application jar file and configuring the operating mode.

To use the HadoopFlowConnector (i.e., to

run in Hadoop mode), Cascading requires that Apache Hadoop be installed

and correctly configured. Hadoop is an Open Source Apache project,

freely available for download from the Hadoop website, http://hadoop.apache.org/core/.

Cascading ships with several jars and dependencies in the download archive. Alternatively, Cascading is available over Maven and Ivy through the Conjars repository, along with a number of other Cascading-related projects. See http://conjars.org for more information.

The core Cascading artifacts include the following:

- cascading-core-2.6.x.jar

This jar contains the Cascading Core class files. It should be packaged with lib/*.jar when using Hadoop.

- cascading-local-2.6.x.jar

This jar contains the Cascading local mode class files. It is not needed when using Hadoop.

- cascading-hadoop-2.6.x.jar

This jar contains the Cascading Hadoop 1 specific dependencies. It should be packaged with lib/*.jar when using Hadoop.

- cascading-hadoop2-mr1-2.6.x.jar

This jar contains the Cascading Hadoop 2 specific dependencies. It should be packaged with lib/*.jar when using Hadoop.

- cascading-xml-2.6.x.jar

This jar contains Cascading XML module class files and is optional. It should be packaged with lib/xml/*.jar when using Hadoop.

Cascading works with either of the Hadoop processing modes - the

default local standalone mode and the distributed cluster mode. As

specified in the Hadoop documentation, running in cluster mode requires

the creation of a Hadoop job jar that includes the Cascading jars, plus

any needed third-party jars, in its lib directory.

This is true for both Cascading Hadoop-mode applications and raw Hadoop MapReduce applications.

During runtime, Hadoop must be told which application jar file

should be pushed to the cluster. Typically, this is done via the Hadoop

API JobConf object.

Cascading offers a shorthand for configuring this parameter, demonstrated here:

Properties properties = new Properties();

// pass in the class name of your application

// this will find the parent jar at runtime

properties = AppProps.appProps()

.setName( "sample-app" )

.setVersion( "1.2.3" )

.addTags( "deploy:prod", "team:engineering" )

.setJarClass( Main.class ) // find jar from class

.buildProperties( properties ); // returns a copy

// ALTERNATIVELY ...

// pass in the path to the parent jar

properties = AppProps.appProps()

.setName( "sample-app" )

.setVersion( "1.2.3" )

.addTags( "deploy:prod", "team:engineering" )

.setJarPath( pathToJar ) // set jar path

.buildProperties( properties ); // returns a copy

// pass properties to the connector

FlowConnector flowConnector = new HadoopFlowConnector( properties );

Above we see two ways to set the same property - via the

setJarClass() method, and via the

setJarPath() method. One is based on a Class

name, and the other is based on a literal path.

The first method takes a Class object that owns the "main"

function for this application. The assumption here is that

Main.class is not located in a Java Jar that is stored in

the lib folder of the application Jar. If it is,

that Jar is pushed to the cluster, not the parent application

jar.
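The caveat above follows from how a jar is found from a class: the lookup resolves to whatever archive the class file was actually loaded from. The sketch below shows one way to do such a lookup with the standard class loader API. It is an illustration only, not necessarily how Hadoop or Cascading resolve the jar, and JarLocatorSketch is a hypothetical name.

```java
import java.net.URL;

class JarLocatorSketch
  {
  // Converts a class to the resource name of its compiled class file
  static String resourceName( Class<?> type )
    {
    return type.getName().replace( '.', '/' ) + ".class";
    }

  // Returns the URL the class file was loaded from, or null if it cannot be resolved
  static URL locate( Class<?> type )
    {
    ClassLoader loader = type.getClassLoader();
    String resource = resourceName( type );

    // classes loaded by the bootstrap loader report a null ClassLoader
    return loader == null ? ClassLoader.getSystemResource( resource ) : loader.getResource( resource );
    }

  public static void main( String[] args )
    {
    // for a class packaged in a jar, the URL names that jar rather than any enclosing archive
    System.out.println( locate( JarLocatorSketch.class ) );
    }
  }
```

If Main.class lives in a jar nested under lib, a lookup of this kind returns the nested jar, which is why setJarPath() is the safer choice in that layout.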

The second method simply sets the path to the Java Jar as a property.

In your application, only one of these methods needs to be called, but one of them must be called to properly configure Hadoop.

Example 4.1. Configuring the Application Jar with a JobConf

JobConf jobConf = new JobConf();

// pass in the class name of your application

// this will find the parent jar at runtime

jobConf.setJarByClass( Main.class );

// ALTERNATIVELY ...

// pass in the path to the parent jar

jobConf.setJar( pathToJar );

// build the properties object using jobConf as defaults

Properties properties = AppProps.appProps()

.setName( "sample-app" )

.setVersion( "1.2.3" )

.addTags( "deploy:prod", "team:engineering" )

.buildProperties( jobConf );

// pass properties to the connector

FlowConnector flowConnector = new HadoopFlowConnector( properties );

Above we are starting with an existing Hadoop

JobConf instance and building a Properties object

with it as the default.